Planning to do a generic analysis from the beginning (or to look at the omnibus F test to see if some factor was significant) is not enough to justify a planned contrast.

I am likely missing something here, but I am a bit confused by some of the comments. This is exactly what we expected, but I'm just not sure of legitimacy statistically speaking as far as the post-hocs go. I did some Bonferroni post-hoc tests to sort out the effect I am really interest in: Vehicle familiar vs drug familiar is significant vehicle unfamiliar vs drug unfamiliar is not. I have a significant effect of recognition, and a significant effect of drug treatment, but no significant interaction. The unfamilar condition is basically a control condition to account for changes in general activity. Rats are tested for their ability to recognize an object (familiar or unfamiliar) and receive a drug or vehicle treatment, so I have a 2x2 ANOVA. Does that also mean that you don't need a significant overall effect for a Bonferroni post-hoc? Another person told me that Bonferroni correction eliminates the need for a significant interaction. One person told me that I cannot use a Bonferroni comparison without a significant interaction. I have heard conflicting opinions on Bonferroni. In general, I was wondering if you need a significant main effect or interaction to run various post-hoc tests.įor example, a statistician told me that I did not need a significant main effect to run a Dunnett's test.

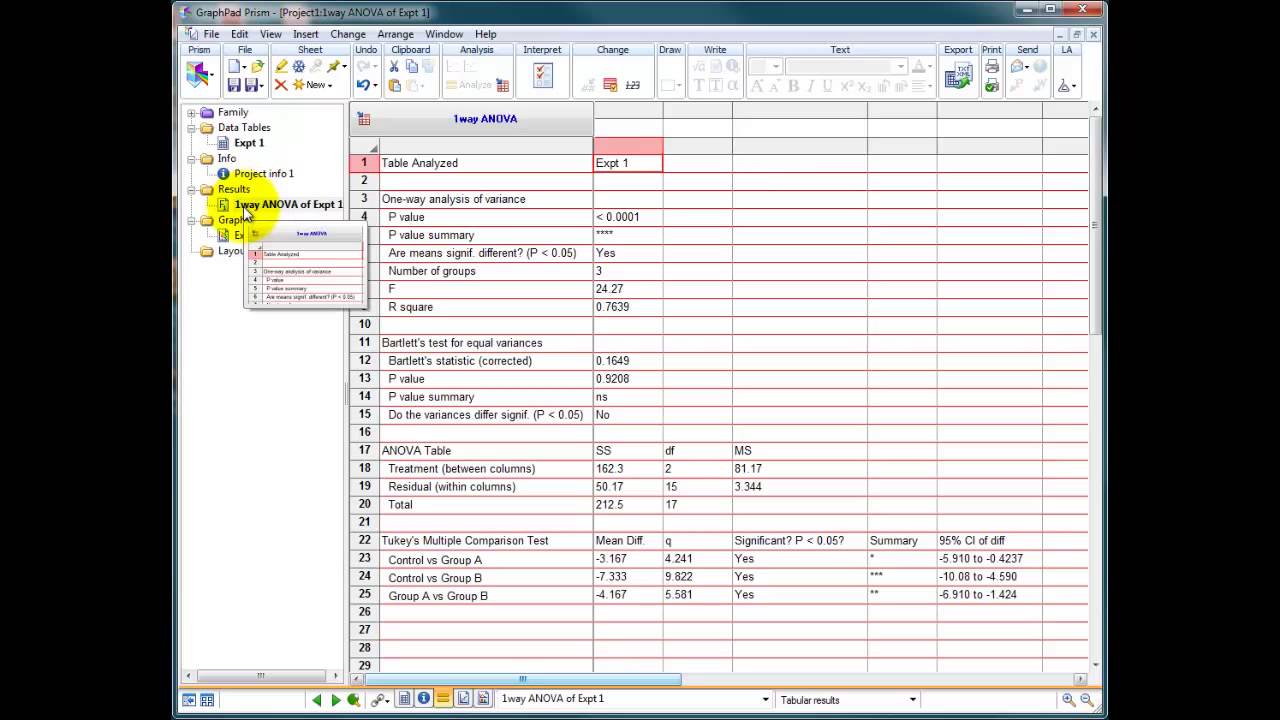

We use the original single step Dunnett method, not the newer step-up or step-down methods.I have a question about ANOVA post-hoc tests in general, with a specific example. Read the details of how these (and other) tests are calculated here.This calculation depends on the value of q, the number of groups being compared, and the number of degrees of freedom. Different tables (or algorithms) are used for the Tukey and Dunnett tests to determine whether or not a q value is large enough for a difference to be declared to be statistically significant.Note that this use of the variable q is distinct from the use of q when using the FDR approach. The only reason to look at these q ratios is to compare Prism's results with texts or other programs. Because of these different definitions, the two q values cannot be usefully compared. For the Dunnett test, q is the difference between the two means (D) divided by the standard error of that difference (computed from all the data): q=D/SED. By historical tradition, this q ratio is computed differently for the two tests. Prism reports the q ratio for each comparison.If you choose 95% intervals, then you can be 95% confident that all of the intervals contain the true population value. This confidence interval accounts for multiple comparisons. Both tests can compute a confidence interval for the difference between the two means.It is possible to compute multiplicity adjusted P values for these tests.These decisions take into account multiple comparisons. The results are a set of decisions: "statistically significant" or "not statistically significant".This gives the test more power to detect differences, and only makes sense when you accept the assumption that all the data are sampled from populations with the same standard deviation, even if the means are different. 
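The q ratio described above can be sketched in a few lines of Python. This is only an illustration, not Prism's code: the function name `q_ratios` and its parameters are hypothetical, the standard error of the difference is assumed to be the usual pooled form sqrt(MSE × (1/n_a + 1/n_b)), and the Tukey ratio is assumed to follow the conventional studentized-range scaling of sqrt(2) × D/SED.

```python
import math

def q_ratios(mean_a, mean_b, n_a, n_b, mse):
    """Return D, SED and both q ratios for one pairwise comparison.

    mse is the Mean Square of Residuals from the ANOVA (pooled over all
    groups). The SED formula and the sqrt(2) factor for the Tukey ratio
    are assumptions based on the standard textbook definitions.
    """
    d = mean_a - mean_b                              # difference between means (D)
    sed = math.sqrt(mse * (1.0 / n_a + 1.0 / n_b))   # standard error of D
    return {
        "D": d,
        "SED": sed,
        "q_dunnett": d / sed,                 # Dunnett: q = D/SED
        "q_tukey": math.sqrt(2) * d / sed,    # Tukey: q = sqrt(2)*D/SED
    }

# Made-up summary values, for illustration only.
example = q_ratios(mean_a=5.0, mean_b=2.0, n_a=3, n_b=3, mse=1.0)
print(example)
```

For the same comparison the two ratios differ by a constant factor of sqrt(2), which is why they have to be looked up against different tables.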
If you choose to compare every mean to a control mean, Prism will perform the Dunnett test.

Key facts about the Tukey and Dunnett tests

The Tukey and Dunnett tests are only used as followup tests to ANOVA. They cannot be used to analyze a stack of P values. The Tukey test compares every mean with every other mean. Prism actually computes the Tukey-Kramer test, which allows for the possibility of unequal sample sizes. The Dunnett test compares every mean to a control mean.

Both tests take into account the scatter of all the groups. This gives you a more precise value for scatter (Mean Square of Residuals) which is reflected in more degrees of freedom. When you compare mean A to mean C, the test compares the difference between means to the amount of scatter, quantified using information from all the groups, not just groups A and C.
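A minimal sketch of what "scatter from all the groups" means when comparing A with C. The helper `residual_scatter` and the data are hypothetical; it computes the Mean Square of Residuals and its degrees of freedom, once from all three groups and once from A and C alone.

```python
def residual_scatter(groups):
    """Return (Mean Square of Residuals, residual degrees of freedom)."""
    ss_resid = 0.0
    df_resid = 0
    for values in groups:
        mean = sum(values) / len(values)
        ss_resid += sum((x - mean) ** 2 for x in values)  # within-group scatter
        df_resid += len(values) - 1                       # one df lost per group mean
    return ss_resid / df_resid, df_resid

# Made-up data for three groups, for illustration only.
a = [4.1, 5.0, 5.9]
b = [6.2, 7.1, 8.0]
c = [7.9, 9.0, 10.1]

mse_all, df_all = residual_scatter([a, b, c])  # what the follow-up tests use
mse_ac, df_ac = residual_scatter([a, c])       # what a two-group comparison would use

print(df_all, df_ac)  # 6 4
```

Group B contributes to the scatter estimate even though it is not part of the A vs C comparison, which is where the extra degrees of freedom come from.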

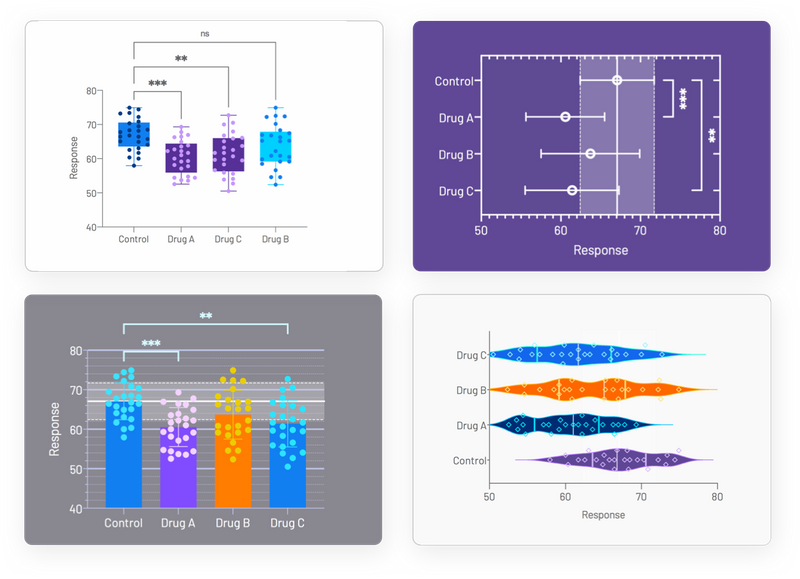

Prism can perform either Tukey or Dunnett tests as part of one- and two-way ANOVA. Choose to assume a Gaussian distribution and to use a multiple comparison test that also reports confidence intervals. If you choose to compare every mean with every other mean, you'll be choosing a Tukey test.
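To make the two choices concrete, the sketch below lists which pairs of means each option compares. It illustrates the comparison sets only, not the tests themselves; the function names and group labels are hypothetical.

```python
from itertools import combinations

def tukey_comparisons(groups):
    """Every mean vs every other mean: k*(k-1)/2 pairs for k groups."""
    return list(combinations(groups, 2))

def dunnett_comparisons(groups, control):
    """Every non-control mean vs the control mean: k-1 pairs for k groups."""
    if control not in groups:
        raise ValueError("control must be one of the groups")
    return [(g, control) for g in groups if g != control]

# Hypothetical group labels, for illustration only.
groups = ["vehicle", "low dose", "mid dose", "high dose"]
tukey_pairs = tukey_comparisons(groups)
dunnett_pairs = dunnett_comparisons(groups, control="vehicle")

print(len(tukey_pairs), len(dunnett_pairs))  # 6 3
```

With four groups, comparing every mean with every other mean gives six comparisons, while comparing each mean with a control gives three. To run the tests themselves in Python, recent SciPy releases provide `scipy.stats.tukey_hsd` and `scipy.stats.dunnett`; whether their output matches Prism's in every detail is not checked here.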